Artificial Intelligence (AI) processing is moving from centralized cloud data centers toward more distributed infrastructure. Two primary paradigms, Cloud AI and Edge AI, offer distinct trade-offs in performance, latency, energy efficiency, and security. This article compares them using benchmarks, industry data, and research insights from Intel and NVIDIA, along with security analyses published by the IEEE.

1. Edge AI vs Cloud AI: Definitions Recap

Cloud AI processes data and runs AI workloads in remote data centers.

Edge AI performs inference directly on edge devices or near-site computing nodes—reducing dependency on central servers.

According to Intel, edge computing puts “computation close to where the data is captured,” enabling real-time responsiveness and reduced bandwidth use.

2. Latency Benchmarks: Measured Evidence

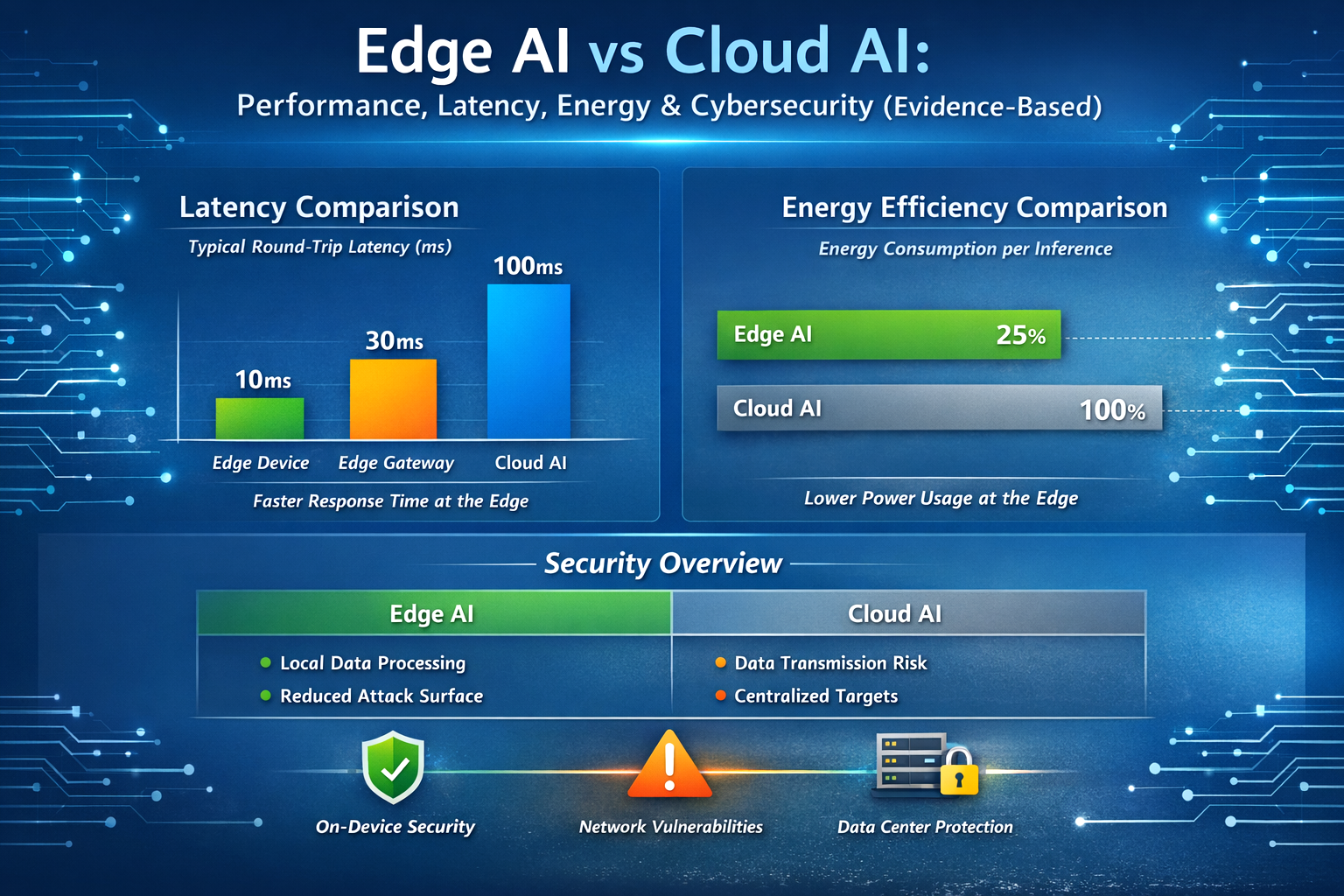

Latency is a core differentiator between cloud-centric and edge-based AI.

⚡ Typical Latency Metrics

| Processing Location | Approx. Round-Trip Latency |
|---|---|
| On-Device Edge AI | ~10–30 ms (sensor → processor → result) |
| Local Edge Node (e.g., gateway) | ~30–50 ms |
| Public Cloud AI | ~100–300 ms+ (network dependent) |

Multiple empirical studies comparing edge and cloud systems show:

- Edge AI inference often executes within ~10–50 ms, sufficient for real-time control (e.g., robotics, autonomous vehicles).

- Cloud AI may introduce higher latency (~100–300 ms+) due to data transmission times and queuing in centralized infrastructure, especially under network congestion.

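For applications that must respond within tens of milliseconds, such as collision avoidance in self-driving systems, Edge AI’s latency profile is a necessity, not a luxury. A cloud round trip of 100–300 ms can consume the entire response budget before inference even starts.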
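The comparison above can be expressed as a simple latency budget: add up the stages on each path and check the total against a deadline. The sketch below is illustrative only. The stage names, the per-stage values, and the 50 ms deadline are assumptions chosen to fall inside the ranges in the table above, not measurements.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class LatencyPath:
    """One processing path, described as named stages with latencies in ms."""
    name: str
    stages_ms: Dict[str, float]

    def total_ms(self) -> float:
        # End-to-end latency is the sum of all stages on the path.
        return sum(self.stages_ms.values())

    def meets(self, deadline_ms: float) -> bool:
        # True when the whole path fits inside the response deadline.
        return self.total_ms() <= deadline_ms


# Hypothetical stage values (assumptions, not benchmark results).
on_device = LatencyPath("on-device edge", {"capture": 5.0, "inference": 15.0})
cloud = LatencyPath(
    "public cloud",
    {"capture": 5.0, "uplink": 60.0, "queue": 20.0, "inference": 15.0, "downlink": 60.0},
)

DEADLINE_MS = 50.0  # assumed real-time control deadline

for path in (on_device, cloud):
    verdict = "meets" if path.meets(DEADLINE_MS) else "misses"
    print(f"{path.name}: {path.total_ms():.0f} ms, {verdict} the {DEADLINE_MS:.0f} ms deadline")
```

With these assumed values, network transfer and queuing dominate the cloud path, which is why faster cloud hardware alone does not close the gap.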

3. Energy Efficiency: Edge Gains vs Cloud Costs

Reducing energy consumption is increasingly critical for sustainable AI.

📊 Energy Comparison Summary

| Metric | Edge AI | Cloud AI |
|---|---|---|
| Per-Inference Energy Use | Lower (local processing) | Often higher (adds data transport and data center overhead) |
| Total System Energy | Reduced for repeated low-volume workloads | Potentially higher due to network transport and large data center overhead |
| Energy per Data Transfer | Near-zero network energy per inference | Higher due to transmission + central compute |

Evidence from Benchmarks

- Edge devices process only sensor-level data, cutting network transmission energy — particularly beneficial when sensor streams are continuous.

- Cloud AI’s energy footprint includes long-haul data transport, queuing, and data center cooling/overhead, which drives higher energy per unit of inference in many use cases.

For example, field reports in industry whitepapers and edge computing trials describe industrial IoT deployments with frequent sensor reads achieving an 80–90% energy reduction when inference ran at the edge instead of transmitting raw data to a cloud server. Actual savings depend on payload size, radio technology, and how often inference runs.
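A rough way to reason about these trade-offs is to model energy per inference as compute energy plus the energy spent transmitting the payload. The sketch below is a simplified model, not a benchmark: every constant (compute cost, payload size, radio cost per byte) is a placeholder assumption, and data center cooling overhead is ignored.

```python
def energy_per_inference_mj(compute_mj: float, payload_bytes: int,
                            radio_mj_per_byte: float) -> float:
    """Energy for one inference in millijoules: compute plus transmission."""
    return compute_mj + payload_bytes * radio_mj_per_byte


def saving_fraction(edge_mj: float, cloud_mj: float) -> float:
    """Fraction of energy saved by the edge path relative to the cloud path."""
    return 1.0 - edge_mj / cloud_mj


# Placeholder assumptions, not measured values.
RADIO_MJ_PER_BYTE = 0.001      # assumed radio cost per transmitted byte
EDGE_COMPUTE_MJ = 50.0         # assumed on-device inference cost
CLOUD_COMPUTE_MJ = 20.0        # assumed server-side inference cost
RESULT_BYTES = 100             # edge sends only a small result
RAW_BYTES = 500_000            # cloud path uploads the raw sample

edge_mj = energy_per_inference_mj(EDGE_COMPUTE_MJ, RESULT_BYTES, RADIO_MJ_PER_BYTE)
cloud_mj = energy_per_inference_mj(CLOUD_COMPUTE_MJ, RAW_BYTES, RADIO_MJ_PER_BYTE)

print(f"edge:  {edge_mj:.1f} mJ per inference")
print(f"cloud: {cloud_mj:.1f} mJ per inference")
print(f"saving: {saving_fraction(edge_mj, cloud_mj):.0%}")
```

In this model the saving is driven almost entirely by the ratio of raw payload size to result size. If the raw payload is small, or the edge processor is inefficient, the advantage shrinks or reverses.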

4. Security & Cyber Risk: Edge vs Cloud

Security must be evaluated across the attack surface, data exposure risk, and management complexity.

🔐 Cybersecurity Advantages of Edge AI

- Minimized Data Transit: With edge processing, sensitive data stays local—reducing exposure to interception during long-range transmission.

- Fewer Central Targets: Data is spread across many devices, so attackers find fewer high-value centralized stores than in cloud infrastructures that hold massive datasets.

According to IEEE security analyses, distributed inference reduces some systemic risks inherent in moving raw data back and forth across networks.

⚠️ Cloud AI Security Profile

Cloud platforms invest heavily in hardened security controls, but higher risk persists during transmission and storage:

- More Potential Attack Vectors—data transits many networks and intermediary nodes.

- Centralized Rich Data Pools—cloud data stores attract attackers due to aggregated sensitive data.

🛡️ Security Risks Unique to Edge AI

Edge AI isn’t inherently safer—it shifts the risk:

- Many Distributed Nodes: Each edge device becomes an endpoint requiring hardened OS, secure boot, and encrypted storage.

- Physical Vulnerability: Edge nodes often operate in less-controlled environments (e.g., factory floors).

- Update Complexity: Securing all devices at scale demands reliable patching and management pipelines.

Combined, these factors mean developers must adopt security-by-design principles for edge deployments, including hardware-based root-of-trust, encrypted local storage, and mutual authentication protocols.
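As one illustration of mutual authentication, the sketch below has an edge device and a gateway each prove knowledge of a pre-shared key through an HMAC challenge-response. It is a minimal teaching example built on Python's standard library. The role labels, function names, and the pre-shared-key setup are assumptions; a production deployment would more likely use mutual TLS with keys held in a hardware root of trust.

```python
import hashlib
import hmac
import secrets


def prove(key: bytes, role: bytes, challenge: bytes) -> bytes:
    """Answer a challenge by MACing the responder's role plus the nonce."""
    # Binding the role into the MAC stops one side's answer being replayed
    # back as the other side's answer (a reflection attack).
    return hmac.new(key, role + b"|" + challenge, hashlib.sha256).digest()


def verify(key: bytes, role: bytes, challenge: bytes, response: bytes) -> bool:
    """Check a response using a constant-time comparison."""
    return hmac.compare_digest(prove(key, role, challenge), response)


def mutual_auth(device_key: bytes, gateway_key: bytes) -> bool:
    """Simulate both directions of the handshake in one process."""
    device_challenge = secrets.token_bytes(16)   # nonce sent device -> gateway
    gateway_challenge = secrets.token_bytes(16)  # nonce sent gateway -> device

    # Each side answers the other's challenge with its own key.
    gateway_response = prove(gateway_key, b"gateway", device_challenge)
    device_response = prove(device_key, b"device", gateway_challenge)

    # Each side verifies the peer's answer against its own copy of the key.
    gateway_ok = verify(device_key, b"gateway", device_challenge, gateway_response)
    device_ok = verify(gateway_key, b"device", gateway_challenge, device_response)
    return gateway_ok and device_ok


shared_key = secrets.token_bytes(32)
print("matching keys:", mutual_auth(shared_key, shared_key))
print("mismatched keys:", mutual_auth(shared_key, secrets.token_bytes(32)))
```

Fresh random nonces on every handshake prevent a captured response from being replayed later.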

5. Industry Insights: Intel & NVIDIA Views

🔍 NVIDIA: Performance + Latency Focus

NVIDIA emphasizes that for real-time inference close to data sources, edge deployment reduces data travel and latency. Their Jetson family of AI accelerators is designed explicitly for low-power, high-performance edge AI workloads, enabling both latency gains and energy efficiency.

🔍 Intel: Hybrid AI for Balanced Needs

Intel’s strategy targets hybrid AI architectures that combine edge and cloud strengths:

- Inference at the Edge to reduce latency and secure sensitive data

- Cloud for training and large-scale analytics

This hybrid model is increasingly recommended in enterprise deployments to capture low-latency responsiveness without sacrificing deep insights and scale.

6. Use Cases: Choosing the Right Paradigm

| Use Case | Best Approach |
|---|---|
| Autonomous Navigation | Edge AI |
| Real-Time Industrial Control | Edge AI |
| Batch Analytics / Model Training | Cloud AI |
| Global Business Intelligence | Cloud AI |
| Sensitive Local Data | Edge AI |

Increasingly, organizations adopt a hybrid strategy: running time-critical inference at the edge, while using cloud resources for training and deeper analytics.

7. Bandwidth Optimization and Data Handling

One major advantage of Edge AI is reduced bandwidth usage. Traditional cloud-based AI systems often require continuous transmission of raw data from devices to remote servers. When devices generate large volumes of sensor or video data, this can put heavy pressure on network infrastructure.

Edge AI reduces this burden by processing data locally and sending only relevant insights to the cloud. Instead of transmitting full datasets, edge devices can filter or analyze data in real time.

For example:

- A smart security camera can analyze video locally and send alerts only when unusual activity is detected.

- An industrial sensor network can report anomalies rather than transmitting continuous sensor streams.

- A smart retail store may process foot-traffic data locally while sending summarized analytics to central dashboards.

This approach significantly lowers network load and improves scalability. Industry studies show that edge processing can reduce transmitted data volumes by 70–95%, depending on the use case.
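The filtering idea can be sketched in a few lines: keep normal readings on the device and forward only values outside an expected band. The thresholds and sample readings below are made up for illustration, and a real deployment would typically use a trained model rather than a fixed band.

```python
from typing import Iterable, List


def filter_anomalies(readings: Iterable[float], low: float, high: float) -> List[float]:
    """Return only the readings that fall outside the [low, high] band."""
    return [r for r in readings if r < low or r > high]


def reduction_percent(total: int, sent: int) -> float:
    """Percentage of readings that never left the device."""
    if total == 0:
        return 0.0
    return 100.0 * (total - sent) / total


# Hypothetical temperature samples; two of them are out of band.
readings = [20.1, 20.3, 35.7, 20.0, 19.8, 20.2, 5.2, 20.1, 20.4, 20.0]
to_cloud = filter_anomalies(readings, low=15.0, high=25.0)

print("sent to cloud:", to_cloud)
print(f"reduction: {reduction_percent(len(readings), len(to_cloud)):.0f}%")
```

The reduction achieved in practice depends on how often anomalies occur and on how much context has to be sent alongside each alert.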

8. Scalability and Infrastructure Design

Cloud AI and Edge AI scale in different ways.

Cloud infrastructure offers large-scale scalability through centralized data centers with powerful GPUs and accelerators. Organizations can increase computing capacity quickly by allocating additional cloud resources.

Edge AI, on the other hand, scales through distributed hardware deployments. Each edge device must contain enough processing power and connectivity to perform local inference.

Although this requires hardware management, it also provides several benefits:

- Distributed resilience – a single node failure rarely disrupts the entire system

- Localized optimization – computing resources can be tailored to specific environments

- Reduced reliance on constant connectivity

This architecture is useful in environments such as remote energy facilities, agricultural monitoring systems, and autonomous transportation networks.

9. Real-World Applications of Edge AI

Edge AI is increasingly used in industries where low latency and real-time decisions are essential.

Autonomous Vehicles

Self-driving systems process data from cameras, lidar, and radar locally. Critical actions like braking or steering must occur instantly without waiting for cloud responses.

Smart Manufacturing

Factories use edge AI for predictive maintenance, defect detection, and robotic control. Real-time analysis helps prevent equipment failures and improve production efficiency.

Healthcare Monitoring

Wearable devices can analyze biometric signals locally, enabling faster alerts when abnormal health patterns are detected.

Smart Cities

Traffic cameras and environmental sensors can process data at the edge, enabling faster traffic management and urban monitoring.

These examples demonstrate how edge computing improves response speed, privacy, and operational reliability.

10. Hybrid AI Architectures

Most modern AI systems combine edge and cloud computing rather than relying on one approach alone.

In hybrid systems:

- Edge devices handle real-time inference and initial data processing

- Cloud platforms manage model training, large-scale analytics, and centralized coordination

For example, a manufacturing system may detect equipment anomalies at the edge while sending summarized data to cloud platforms for long-term analysis and model improvements.

This hybrid model allows organizations to maintain fast local decision-making while benefiting from the computational power of cloud infrastructure.
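The division of labor described above might look like the following sketch: the edge node makes the time-critical decision on each reading and periodically hands a compact summary to the cloud. The `EdgeNode` class, the fixed threshold, and the summary fields are hypothetical, and the actual upload step is left out.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Dict, List


@dataclass
class EdgeNode:
    """Edge-side component: decides locally, summarizes for the cloud."""
    threshold: float
    window: List[float] = field(default_factory=list)

    def infer(self, value: float) -> bool:
        """Local decision with no network round trip: flag high readings."""
        self.window.append(value)
        return value > self.threshold

    def flush_summary(self) -> Dict[str, float]:
        """Build a compact summary for long-term cloud analysis, then reset."""
        if not self.window:
            return {"count": 0}
        summary = {
            "count": len(self.window),
            "mean": mean(self.window),
            "max": max(self.window),
            "anomalies": sum(v > self.threshold for v in self.window),
        }
        self.window.clear()
        return summary


node = EdgeNode(threshold=80.0)  # assumed vibration or temperature limit
alerts = [node.infer(v) for v in [70.0, 75.0, 90.0, 72.0]]
summary = node.flush_summary()   # this dict is what would be sent to the cloud

print("local alerts:", alerts)
print("cloud summary:", summary)
```

The cloud side would then use accumulated summaries for trend analysis and retraining, pushing updated thresholds or models back to the nodes.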

11. Future Trends in Edge and Cloud AI

Several technological trends are shaping the future of distributed AI systems.

Specialized AI Hardware

New processors designed for AI workloads are improving the efficiency of edge devices, enabling powerful inference in compact systems.

Federated Learning

This approach allows devices to train models collaboratively without sharing raw data, improving privacy and data protection.
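A common way to combine locally trained models is a weighted average of their parameters, in the style of federated averaging. The article does not name a specific algorithm, so the sketch below is one possible illustration: only model weights and sample counts leave each device, never the raw data. The function name and the plain-list weight format are assumptions.

```python
from typing import List, Sequence


def federated_average(client_weights: Sequence[Sequence[float]],
                      client_sizes: Sequence[int]) -> List[float]:
    """Average client model weights, weighted by local sample counts."""
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")

    dim = len(client_weights[0])
    averaged = [0.0] * dim
    for weights, n in zip(client_weights, client_sizes):
        if len(weights) != dim:
            raise ValueError("all clients must share the same model shape")
        for i, w in enumerate(weights):
            # Clients with more local data contribute proportionally more.
            averaged[i] += w * n / total
    return averaged


# Two hypothetical devices with 1 and 3 local samples respectively.
global_weights = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print("global model weights:", global_weights)
```

In a full system this averaging step runs on a coordinating server each round, and the result is sent back to the devices as the starting point for the next round of local training.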

5G Connectivity

High-speed networks reduce communication delays and support edge-cloud integration for faster data processing.

Privacy-Focused AI

As data protection regulations expand, keeping sensitive information closer to its source will become increasingly important.

Together, these developments suggest that distributed AI systems combining edge and cloud computing will become the dominant architecture in future AI deployments.

12. Conclusion: Trade-offs & Practical Guidance

Latency: Edge AI consistently delivers lower response times suited for real-time systems.

Energy Efficiency: Edge AI often requires less energy per inference when workloads are frequent and data transport is minimized.

Security: Edge AI reduces some systemic risks by keeping data local, but also increases endpoint management complexity.

Cloud AI retains dominance in large-scale compute, deep analytics, and centralized management.

In practice, the future isn’t edge vs cloud—it’s edge + cloud working together, with each playing roles tailored to specific application needs.

About the Author

Ashish Kumar Bhowmick is the founder of AshimHub, a platform dedicated to exploring technology, AI tools, gadgets, and emerging digital trends. With a strong passion for simplifying complex technologies, he creates practical guides, product comparisons, and tutorials that help readers make smarter technology decisions.

Alongside his work in technology content, Ashish has professional experience in talent acquisition and recruitment coaching. He has supported organizations and professionals in improving hiring strategies, building stronger recruitment processes, and developing career growth pathways in competitive job markets.

Through AshimHub, Ashish combines technology insights with professional expertise, delivering valuable content that empowers both tech enthusiasts and career-focused readers. His mission is to make technology and professional development more accessible, practical, and easy to understand for everyday users.

Connect with him on LinkedIn:

https://www.linkedin.com/in/ashish-bhowmick-42961311/